mlpack blog

Variational Autoencoders - Week 11

I did some refactoring of the code in the PR for the models repository. I modified the generation scripts and played around with the learned distribution.

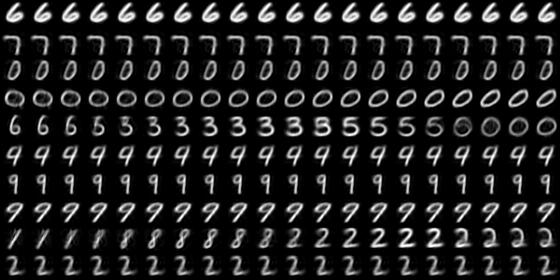

The following samples are the result of varying each of the 10 latent variables independently while keeping the others fixed.

As we can see, only the 3rd, 4th and 9th latent variables affect the generated data.
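To make the procedure concrete, here is a minimal sketch of how such a sweep over one latent variable could be set up. It only builds the latent vectors; passing them through the trained decoder is left out. The function names, the base value of 0 for the untouched variables and the sweep range of [-3, 3] are assumptions for illustration, not what the generation scripts in the PR necessarily do.

```python
def linspace(start, stop, num):
    """Return `num` evenly spaced values from start to stop (inclusive)."""
    if num == 1:
        return [float(start)]
    step = (stop - start) / (num - 1)
    return [start + i * step for i in range(num)]


def traverse_latent(latent_dim, index, values, base=None):
    """Return one latent vector per value, changing only position `index`.

    All other latent variables keep the value given in `base`
    (assumed to be 0, the mean of a standard normal prior).
    """
    if not 0 <= index < latent_dim:
        raise ValueError("index must be in [0, latent_dim)")
    base = [0.0] * latent_dim if base is None else list(base)
    if len(base) != latent_dim:
        raise ValueError("base must have latent_dim entries")

    vectors = []
    for value in values:
        z = list(base)          # copy so each sample is independent
        z[index] = float(value)
        vectors.append(z)
    return vectors


# Sweep the 3rd latent variable (index 2) of a 10-dimensional latent space.
sweep = traverse_latent(10, 2, linspace(-3.0, 3.0, 5))
```

Each vector in `sweep` would then be fed to the decoder. If the decoded images do not change along the sweep, that latent variable has little effect on the generated data.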

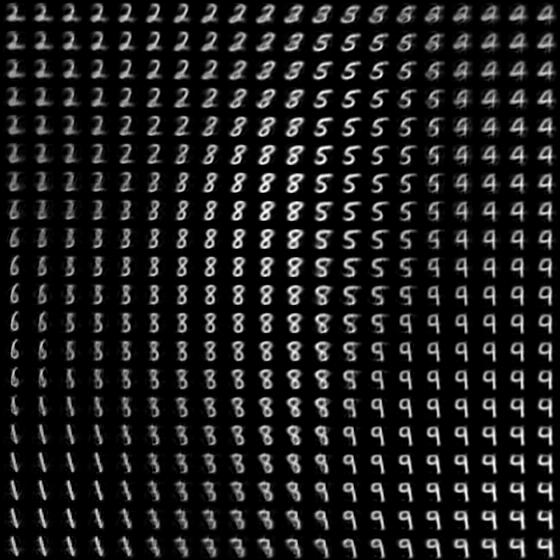

So I took two of them, the 3rd and 4th, and varied them jointly over a two-dimensional grid. Here is how it looks.

The digits that do not appear here are the ones that depend on the 9th latent variable; I checked this by varying it as well. Collectively, these three latent variables can generate all the MNIST digits.
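The two-dimensional variation described above can be sketched as a grid of latent vectors in which two chosen variables take every combination of values and the rest stay fixed. As before, the function name, the fixed value of 0 for the other variables and the grid values are assumptions for illustration, and decoding is omitted.

```python
def latent_grid(latent_dim, index_a, index_b, values_a, values_b):
    """Build a 2-D grid of latent vectors.

    Rows follow `values_a` (written to position `index_a`), columns follow
    `values_b` (written to position `index_b`). Every other latent variable
    is held at 0, which is an assumption of this sketch.
    """
    if index_a == index_b:
        raise ValueError("the two indices must differ")

    grid = []
    for a in values_a:
        row = []
        for b in values_b:
            z = [0.0] * latent_dim
            z[index_a] = float(a)
            z[index_b] = float(b)
            row.append(z)
        grid.append(row)
    return grid


# Vary the 3rd and 4th latent variables (indices 2 and 3) on a 3 x 3 grid.
values = [-2.0, 0.0, 2.0]
grid = latent_grid(10, 2, 3, values, values)
```

Decoding `grid[i][j]` for every cell and tiling the outputs gives an image like the one above, with one latent variable changing along each axis.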

I also did some cherry-picking and split up the huge ReconstructionLoss PR. The NormalDistribution PR will be kept open until we can prove that it works. The BernoulliDistribution PR has been reviewed and needs to be merged, since the other PRs depend on it.

For CVAEs (Conditional Variational Autoencoders), we will need to use Forward(), Backward() and Update() instead of the Train() function, as we need to append the labels to the output of the Reparametrization layer midway through the forward pass.

I am now training a convolutional VAE model on the CelebA dataset. I think it is on this dataset that experiments with beta-VAEs and CVAEs can be really interesting.
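The label-appending step can be illustrated with a small standalone sketch. It does not use the mlpack API. It assumes the encoder outputs a mean and a log-variance per latent variable (mlpack's Reparametrization layer may parametrize the standard deviation differently) and that the label is appended as a one-hot vector. All function names here are hypothetical.

```python
import math
import random


def reparametrize(mean, log_var, eps=None):
    """Sample z = mean + std * eps with std = exp(0.5 * log_var).

    `eps` is drawn from a standard normal unless supplied, which makes the
    function deterministic for testing.
    """
    if eps is None:
        eps = [random.gauss(0.0, 1.0) for _ in mean]
    return [m + math.exp(0.5 * lv) * e for m, lv, e in zip(mean, log_var, eps)]


def one_hot(label, num_classes):
    """Encode an integer class label as a one-hot list of floats."""
    if not 0 <= label < num_classes:
        raise ValueError("label out of range")
    vec = [0.0] * num_classes
    vec[label] = 1.0
    return vec


def conditional_decoder_input(mean, log_var, label, num_classes, eps=None):
    """Append the one-hot label to the sampled latent vector.

    This is the step that happens midway through the forward pass,
    between the encoder half and the decoder half of the network.
    """
    z = reparametrize(mean, log_var, eps)
    return z + one_hot(label, num_classes)


# Two latent variables, digit label 3 out of 10 classes, fixed noise.
decoder_input = conditional_decoder_input(
    mean=[0.5, -1.0], log_var=[0.0, 0.0], label=3, num_classes=10,
    eps=[1.0, 2.0])
```

Because the concatenation sits between the two halves of the network, a single end-to-end training call cannot perform it, which is why the forward and backward passes have to be driven manually.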

I also trained a model on binary MNIST. Here are the results. Sampling from the prior:

Sampling from the posterior:
